Chain of [draft] // Better than CoT

Chain of Draft // a concise reasoning prompting strategy inspired by human cognitive processes

Chain-of-Draft prompting condenses the reasoning process into minimal, abstract representations.

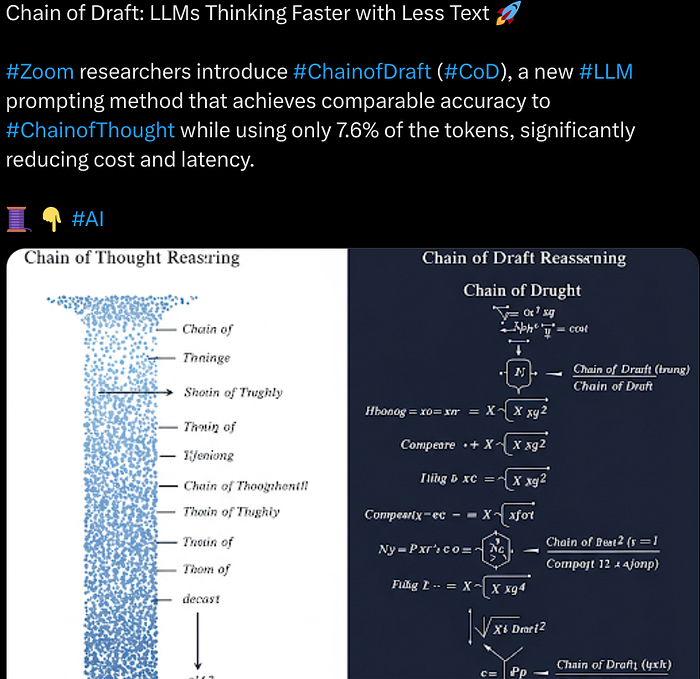

Large Language Models (LLMs) have demonstrated remarkable performance in solving complex reasoning tasks through mechanisms like Chain-of-Thought (CoT) prompting, which emphasizes verbose, step-by-step reasoning. However, humans typically employ a more efficient strategy: drafting concise intermediate thoughts that capture only essential information. In this work, we propose Chain of Draft (CoD), a novel paradigm inspired by human cognitive processes, where LLMs generate minimalistic yet informative intermediate reasoning outputs while solving tasks. By reducing verbosity and focusing on critical insights, CoD matches or surpasses CoT in accuracy while using as little as 7.6% of the tokens, significantly reducing cost and latency across various reasoning tasks.
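To make the difference between the two prompting styles concrete, here is a minimal sketch of how the instructions might differ. The instruction wording, the `build_prompt` and `extract_answer` helpers, and the choice to split on the last `####` are illustrative assumptions, not quoted from the source; only the `####` answer separator appears in the examples that follow.

```python
# Hypothetical instruction wording: illustrative only, not quoted from the source.
COT_INSTRUCTION = (
    "Think step by step to answer the question. "
    "Return the final answer after the separator ####."
)
COD_INSTRUCTION = (
    "Think step by step, but keep only a minimal draft for each step. "
    "Return the final answer after the separator ####."
)

SEPARATOR = "####"


def build_prompt(instruction: str, question: str) -> str:
    """Combine an instruction and a question into one prompt string."""
    return f"{instruction}\n\nQ: {question}\nA:"


def extract_answer(response: str) -> str:
    """Return the text after the last separator, or the whole response if none is found."""
    _, found, tail = response.rpartition(SEPARATOR)
    return tail.strip() if found else response.strip()


if __name__ == "__main__":
    question = (
        "Jason had 20 lollipops. He gave Denny some lollipops. "
        "Now Jason has 12 lollipops. How many lollipops did Jason give to Denny?"
    )
    print(build_prompt(COD_INSTRUCTION, question))
    print(extract_answer("20 - x = 12; x = 20 - 12 = 8. #### 8"))
```

Under these assumptions, the only change between the two styles is the instruction text; the question format and the answer parsing stay the same.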

Chain-of-Thought

Q: Jason had 20 lollipops. He gave Denny some lollipops. Now Jason has 12 lollipops. How many lollipops did Jason give to Denny?

A: Let’s think through this step by step:

1. Initially, Jason had 20 lollipops.
2. After giving some to Denny, Jason now has 12 lollipops.
3. To find out how many lollipops Jason gave to Denny, we need to calculate the difference between the initial number of lollipops and the remaining number.
4. We can set up a simple subtraction problem: Initial number of lollipops - Remaining number of lollipops = Lollipops given to Denny
5. Putting in the numbers: 20 - 12 = Lollipops given to Denny
6. Solving the subtraction: 20 - 12 = 8

Therefore, Jason gave 8 lollipops to Denny.

#### 8 lollipops

Chain-of-Draft

Q: Jason had 20 lollipops. He gave Denny some lollipops. Now Jason has 12 lollipops. How many lollipops did Jason give to Denny?

A: 20 - x = 12; x = 20 - 12 = 8. #### 8

The latency issue has often been overlooked in studies of the reasoning capabilities of LLMs. However, many real-time applications need low latency while maintaining high-quality responses. In this work, we propose Chain of Draft (CoD), a novel approach that substantially reduces the latency required for reasoning while achieving comparable or even superior accuracy compared to standard Chain-of-Thought prompting strategies. Unlike traditional methods that often involve lengthy reasoning steps, CoD leverages concise reasoning drafts to speed up response generation without sacrificing correctness.

Additionally, CoD offers significant cost advantages. By compacting the reasoning steps, it reduces the number of input tokens required for few-shot prompting and shortens the output token length, directly lowering computational cost. This token efficiency makes CoD especially appealing in cost-sensitive scenarios, such as large-scale deployments of LLMs or applications with strict budget constraints.

CoD demonstrates that effective reasoning in LLMs does not necessarily require lengthy outputs, offering an alternative approach where reasoning depth is maintained with minimal verbosity. Future work could explore combining CoD with other latency-reducing methods, such as adaptive parallel reasoning or multi-pass validation, to further optimize performance across different application domains. In addition, the principles behind the compact reasoning of CoD could inspire new strategies to improve reasoning models by training with compact reasoning data, while maintaining interpretability and efficiency in LLMs, helping bridge the gap between research-driven improvements in reasoning and the practical demands of real-world systems.
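As a rough illustration of the token-efficiency argument, the two lollipop answers shown earlier can be compared by length. This sketch counts whitespace-separated words as a stand-in for tokens, which is an assumption: a real tokenizer would give different counts, and this toy comparison is not the measurement behind the 7.6% figure. The helper names are hypothetical.

```python
# The two answers from the lollipop example, copied as plain strings.
COT_ANSWER = """Let's think through this step by step:
1. Initially, Jason had 20 lollipops.
2. After giving some to Denny, Jason now has 12 lollipops.
3. To find out how many lollipops Jason gave to Denny, we need to calculate
the difference between the initial number of lollipops and the remaining number.
4. We can set up a simple subtraction problem: Initial number of lollipops -
Remaining number of lollipops = Lollipops given to Denny
5. Putting in the numbers: 20 - 12 = Lollipops given to Denny
6. Solving the subtraction: 20 - 12 = 8
Therefore, Jason gave 8 lollipops to Denny.
#### 8 lollipops"""

COD_ANSWER = "20 - x = 12; x = 20 - 12 = 8. #### 8"


def rough_token_count(text: str) -> int:
    """Count whitespace-separated words as a crude proxy for tokens (assumption)."""
    return len(text.split())


def draft_to_thought_ratio(cod: str, cot: str) -> float:
    """Return the CoD length as a fraction of the CoT length under the word-count proxy."""
    return rough_token_count(cod) / rough_token_count(cot)


if __name__ == "__main__":
    ratio = draft_to_thought_ratio(COD_ANSWER, COT_ANSWER)
    print(f"CoT words: {rough_token_count(COT_ANSWER)}")
    print(f"CoD words: {rough_token_count(COD_ANSWER)}")
    print(f"CoD / CoT length ratio: {ratio:.1%}")
```

Because output length drives both generation latency and per-token cost, a smaller ratio here corresponds directly to the savings described above, although the exact figure depends on the tokenizer and the task.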